by Pete Johnston

I’ve been working on a first attempt at processing the Encoded Archival Description (EAD) XML output provided by Karen from their CALM database in order to generate RDF data for the Mass Observation Archive. My starting point has been the work done within the LOCAH project, to which I’ve also been contributing, and which is also transforming EAD data into linked data.

I’m making use of the same general approach as we’ve used within the LOCAH project, so as background to this post, it’s probably worth having a look at some of the relevant posts on the LOCAH blog and/or at the initial dataset they have just released.

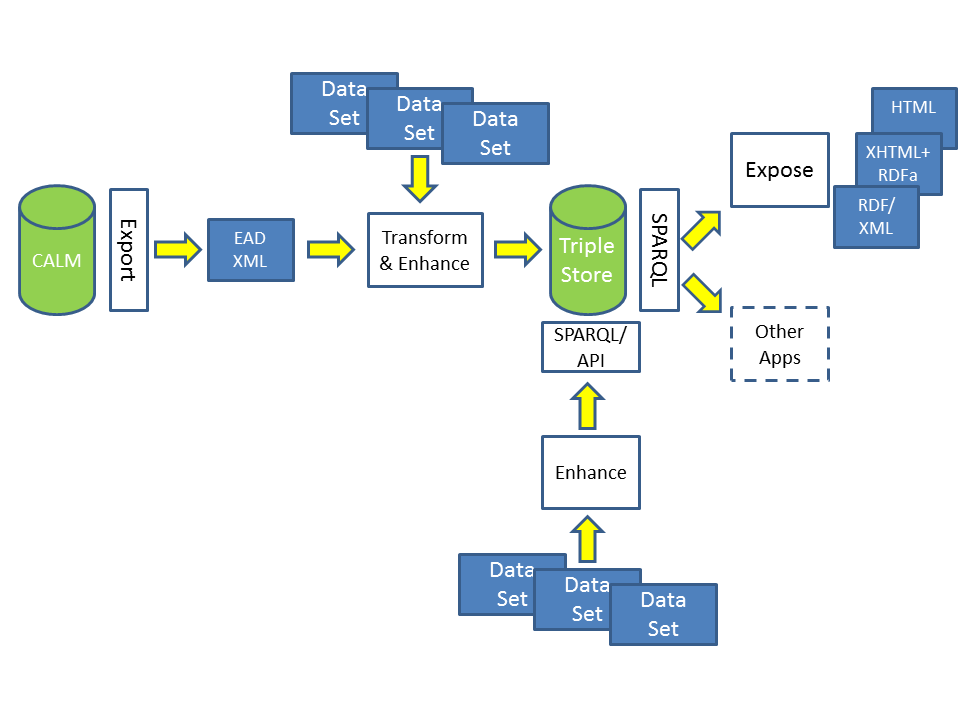

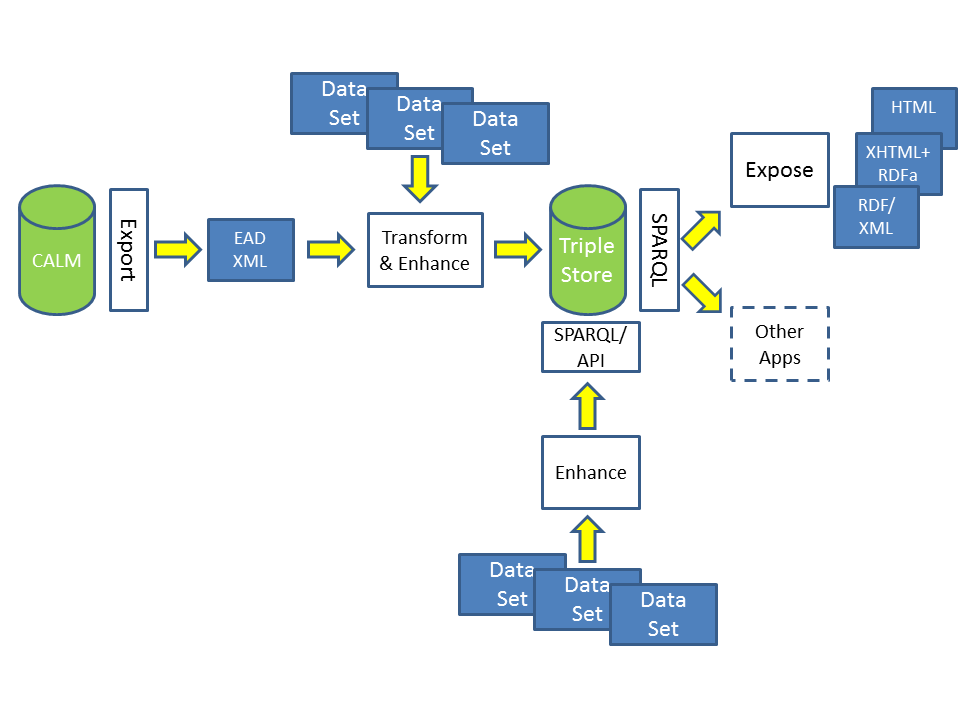

The “workflow” for the SALDA/MOA case is similar to that described in the first part of this post, with an additional preliminary step of exporting data from the CALM database into the EAD XML format. And as I’ll explain further below, for the SALDA case, the “transform” step will also include a small element of what I was calling “enhancement” – the augmentation of the EAD content with some additional data.

We’re making use of (more or less – more on this below too) the same model of “things in the world” as we’ve applied in the LOCAH project (see these three posts for details 1, 2, 3); the same patterns for URIs for identifying the individual “things” – within a University of Sussex URI-space, as Karen and Chris have discussed in recent posts here; and (more or less) the same RDF vocabularies for describing those “things”.

EAD and the LOCAH and SALDA EAD data

As I noted in the first of those posts over on the LOCAH blog, the EAD format is, by design, a fairly “flexible” and “permissive” XML format. It was designed to accommodate the “encoding” of existing archival finding aids of various types and constructed by different cataloguing communities, some with practices and traditions which varied to a greater or lesser degree. EAD also allows for variation in the “level of detail” of markup that can be applied, from a focus on the identification of broad structural components to a more “fine-grained” identification of structures within the text of those components. As a result the structure of EAD XML documents can vary considerably from one instance to the next.

The LOCAH project is dealing with EAD data aggregated by the JISC Archives Hub service. This is data provided by multiple data providers, in some cases over an extended period of time, and sometimes using different data creation tools – and one of the challenges in LOCAH has been dealing with the variations across that body of data. SALDA, on the other hand, is dealing with data from a single data source, under the control of a single data provider – the MOA data is actually exported from the CALM database in the form of a single EAD document, albeit quite a large one!

So while the LOCAH input data includes EAD documents using slightly different structural and content conventions, for SALDA, that structure is regular and predictable, and furthermore some element of “normalisation” of content is implemented through the rules and checks performed by the CALM database application.

So far, so good, then, in terms of making the MOA EAD data relatively straightforward to process.

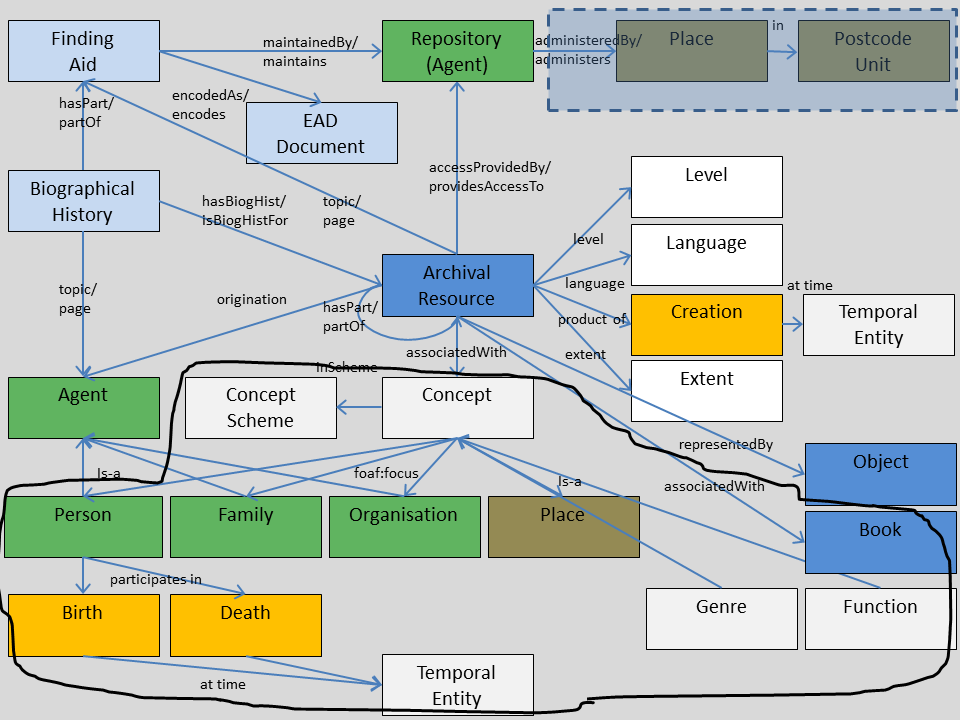

Index Terms

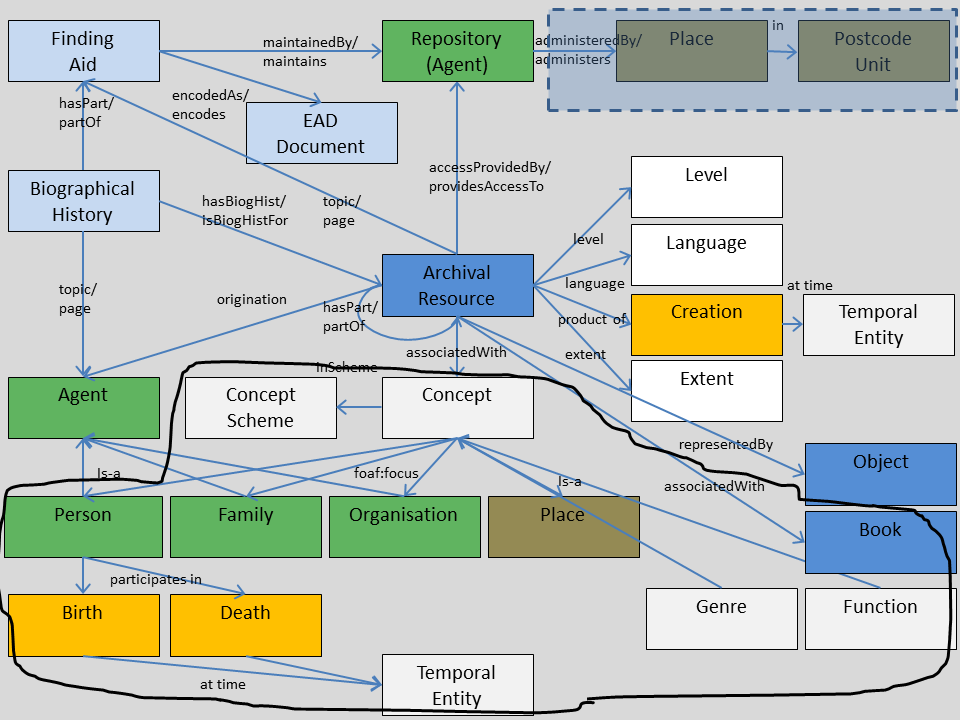

The data creation guidelines for contributors to the Archives Hub recommend the provision of “index terms” or “access points” using the EAD controlaccess element – names of topics, persons, families, organisations, places, genres or functions, whose association with the archival resource is potentially useful for people searching the finding aid. Those names are (in principle, at least!) provided in a “standardised” form (i.e. either drawn from a specified “authority file” of names or constructed using a specified set of rules) so that two documents using the same authority file or the same rules should provide the same name in the same form. In the process of transforming EAD into RDF within the LOCAH project, the controlaccess element is a significant source of information about “things” associated with the archival resource. Below is a version of the graphical representation of the LOCAH model, taken from this post. Data about the entities circled in the lower part of the diagram is all derived from the LOCAH EAD controlaccess data.
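As a rough illustration of where this information sits in the XML, the Python sketch below collects index terms from the children of controlaccess using the standard-library ElementTree parser. It is not the LOCAH transform (which is XSLT); the EAD fragment and the function name `index_terms` are hypothetical, the fragment is only loosely modelled on the Hooker example discussed later in this post, and it omits the EAD namespace that real documents may declare.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Hypothetical, namespace-free EAD fragment, for illustration only.
SAMPLE_EAD = """
<archdesc level="fonds">
  <did><unittitle>Papers of Sir Joseph Dalton Hooker</unittitle></did>
  <controlaccess>
    <subject source="lcsh">Antarctica -- Discovery and exploration</subject>
    <persname source="nra">Hooker, Joseph Dalton, 1817-1911, Sir, knight, botanist</persname>
    <subject source="unesco">Botany</subject>
  </controlaccess>
</archdesc>
"""

# EAD elements that can appear inside controlaccess as index terms.
TERM_ELEMENTS = {"subject", "persname", "corpname", "famname",
                 "geogname", "genreform", "function"}


def index_terms(ead_xml: str) -> list[tuple[str, str | None, str]]:
    """Return (element name, source attribute, whitespace-normalised text)
    for each index term that is a direct child of a controlaccess element."""
    root = ET.fromstring(ead_xml.strip())
    terms = []
    for access in root.iter("controlaccess"):
        for child in access:
            if child.tag in TERM_ELEMENTS:
                text = " ".join("".join(child.itertext()).split())
                terms.append((child.tag, child.get("source"), text))
    return terms
```

Calling `index_terms(SAMPLE_EAD)` yields three tuples, one per term; the `source` attribute is what distinguishes, say, an LCSH subject from a UNESCO one.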

In the MOA data, however, no controlaccess terms are provided. Talking this over recently with Karen and Chris, though, made it clear that there are some associations implicit in the MOA data, and some “hooks” in the data which can provide the basis for generating explicit associations in the RDF data. This is probably best illustrated through some concrete examples.

“Topic Collections”

One section of the Mass Observation Archive takes the form of a sequence of “Topic Collections”, in which documents of various types are grouped together by theme or subject, the name of which forms part of the title of a “series” within the section, i.e. the series have titles like:

- TC1 Housing 1938-48

- TC6 Conscientious Objection & Pacifism 1939-44

- TC7 Happiness 1938

Although the titles are encoded in the EAD documents as unstructured text (as the content of the EAD unittitle element), the text has a consistent/predictable form of: code number, name of topic, date(s) of period of creation.

We can take advantage of this consistency in the transformation process and, with some fairly simple parsing of the text of the title, generate a description of a concept with its own URI and name/label (e.g. “Housing”, “Conscientious Objection & Pacifism” or “Happiness”), and a link between the archival resource and the concept. (For this case, the dates are provided explicitly elsewhere in the EAD document and already handled by the transformation process.)
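A minimal sketch of that title parsing in Python, assuming every Topic Collection title is exactly “TC” plus a number, then the topic name, then a year or year range at the end. The base URI `http://data.example.org/...` and the function names are hypothetical placeholders, not the SALDA URI patterns; the URI “slug” merely imitates the style of the LOCAH concept URIs quoted later in this post.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Assumed title shape: "TC<number> <topic name> <year or year range>".
TITLE_PATTERN = re.compile(
    r"^TC(?P<num>\d+)\s+(?P<topic>.+?)\s+(?P<dates>\d{4}(?:-\d{2,4})?)$"
)

# Hypothetical base URI, standing in for the real University of Sussex URI space.
TOPIC_BASE = "http://data.example.org/id/concept/moatopic/"


@dataclass
class TopicTitle:
    code: str
    topic: str
    dates: str


def parse_topic_title(title: str) -> TopicTitle | None:
    """Split a Topic Collection title into code, topic name and dates,
    or return None if the title does not have the expected shape."""
    m = TITLE_PATTERN.match(title.strip())
    if m is None:
        return None
    return TopicTitle(code="TC" + m["num"], topic=m["topic"], dates=m["dates"])


def topic_concept_uri(topic: str) -> str:
    """Mint a concept URI by keeping only the lower-cased letters and
    digits of the topic name."""
    return TOPIC_BASE + re.sub(r"[^a-z0-9]", "", topic.lower())
```

For example, “TC6 Conscientious Objection & Pacifism 1939-44” parses to the topic “Conscientious Objection & Pacifism”. Anchoring the pattern at both ends means a title that does not fit returns None, so it can be left to the general processing rather than producing a malformed concept.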

Series by Place

Within one of the “Topic Collections” (on air raids), sets of reports are grouped by place, where the name of the place is used as the title of the “file”. So again, it is straightforward to generate a small chunk of data “about” the place with its own URI and name/label, and a link between the archival resource and the place.

In both this case and the “topic collections” case, we can also be quite specific about the nature of the relationship between the archival resource and the concept or place. In the LOCAH case, we’ve limited ourselves to making a very general “associated with” relationship between the archival resource and the controlaccess entity, on the grounds that the cataloguer may have made the association with the archival material based on many different “real world” relationships. For these cases in SALDA, we can be more specific, and say that the relationship is one of “aboutness”/has-as-topic, which can be expressed using the Dublin Core dcterms:subject property.
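Once a topic or place has been extracted, the output for either case is small. The Python sketch below writes N-Triples strings by hand (to stay dependency-free), linking an archival resource to its target with dcterms:subject and labelling the target. Typing topics as skos:Concept with skos:prefLabel, and giving places a plain rdfs:label, are assumptions for illustration rather than the SALDA vocabulary choices; the function names are hypothetical.

```python
from __future__ import annotations

DCTERMS_SUBJECT = "http://purl.org/dc/terms/subject"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"
SKOS_CONCEPT = "http://www.w3.org/2004/02/skos/core#Concept"
SKOS_PREF_LABEL = "http://www.w3.org/2004/02/skos/core#prefLabel"


def nt_literal(text: str) -> str:
    """Quote a string as an N-Triples literal, escaping backslash,
    double quote, line feed and carriage return."""
    escaped = (text.replace("\\", "\\\\").replace('"', '\\"')
                   .replace("\n", "\\n").replace("\r", "\\r"))
    return f'"{escaped}"'


def subject_triples(resource: str, target: str, label: str,
                    is_concept: bool) -> list[str]:
    """Link an archival resource to a topic concept or a place with
    dcterms:subject, and give the target a label."""
    label_prop = SKOS_PREF_LABEL if is_concept else RDFS_LABEL
    triples = [
        f"<{resource}> <{DCTERMS_SUBJECT}> <{target}> .",
        f"<{target}> <{label_prop}> {nt_literal(label)} .",
    ]
    if is_concept:
        triples.append(f"<{target}> <{RDF_TYPE}> <{SKOS_CONCEPT}> .")
    return triples
```

A place-series file titled with a (hypothetical) place name “Exampleton” would produce two triples; a topic collection produces three, the extra one typing the topic as a concept.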

Directives by Date

Another section of the archive lists responses to “directives” (questionnaires) by date. In these cases the dates are not provided separately in the EAD data, but again the consistent form of the title makes it relatively straightforward to extract and present the dates explicitly in the RDF data.
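Assuming, purely for illustration, that a directive title ends in an English month name and a four-digit year (a hypothetical “Directive, March 1939”), the date can be pulled out and expressed as an xsd:gYearMonth literal as in the Python sketch below. The title shape and the function name are assumptions; the pattern would need adjusting to the real MOA titles.

```python
from __future__ import annotations

import re

MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]

# Hypothetical title shape: free text ending in "<Month> <Year>".
MONTH_YEAR = re.compile(r"\b(" + "|".join(MONTHS) + r")\s+(\d{4})\s*$")

XSD_GYEARMONTH = "http://www.w3.org/2001/XMLSchema#gYearMonth"


def directive_date(title: str) -> str | None:
    """Return an xsd:gYearMonth N-Triples literal for a title ending in
    a month and year, or None if no such date is found."""
    m = MONTH_YEAR.search(title)
    if m is None:
        return None
    month = MONTHS.index(m.group(1)) + 1  # 1-based month number
    return f'"{m.group(2)}-{month:02d}"^^<{XSD_GYEARMONTH}>'
```

Mapping month names through a fixed list, rather than `datetime.strptime`, avoids any dependence on the process locale.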

Keywords

Each of the above examples exploits some implicit structure in text content within the EAD document. A second approach we’ve applied is to scan the content of some EAD elements for words or phrases that can be mapped to specific entities (concepts, persons, organisations, places). In making this mapping, we’re really taking advantage of the fact that for the SALDA case we have a fairly well-defined context or scope, defined by the scope of the archival collection itself. So within that context, we can be reasonably confident that an occurrence of the word “Churchill” is a reference to the war-time Prime Minister, rather than to another member of his family, or a Cambridge college, or an Oxfordshire town.

Because this process involves matching against a relatively short list of known concepts, places, persons and organisations, I’ve been able to extend the “lookup table” to include some URIs from DBpedia, Geonames and the Library of Congress LCSH dataset, which I use to construct owl:sameAs or skos:closeMatch/skos:exactMatch links to external resources as part of the transformation process.
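A minimal Python sketch of such a lookup table follows. The keywords, the local URIs under `http://data.example.org/` and the function names are hypothetical; the DBpedia URIs are included only as examples of the kind of external link described above. Matching is case-insensitive on whole words or phrases.

```python
from __future__ import annotations

import re

OWL_SAME_AS = "http://www.w3.org/2002/07/owl#sameAs"
SKOS_CLOSE_MATCH = "http://www.w3.org/2004/02/skos/core#closeMatch"

# Illustrative table: keyword -> (local URI, [(property, external URI)]).
LOOKUP = {
    "Churchill": ("http://data.example.org/id/person/churchillwinston",
                  [(OWL_SAME_AS, "http://dbpedia.org/resource/Winston_Churchill")]),
    "rationing": ("http://data.example.org/id/concept/rationing",
                  [(SKOS_CLOSE_MATCH, "http://dbpedia.org/resource/Rationing")]),
}


def match_keywords(text: str) -> list[str]:
    """Return the local URIs whose keyword occurs in the text as a whole
    word or phrase, ignoring case, in table order."""
    found = []
    for keyword, (local_uri, _links) in LOOKUP.items():
        if re.search(r"\b" + re.escape(keyword) + r"\b", text, re.IGNORECASE):
            found.append(local_uri)
    return found


def external_links(local_uri: str) -> list[str]:
    """N-Triples statements linking a matched local URI to external URIs."""
    for uri, links in LOOKUP.values():
        if uri == local_uri:
            return [f"<{uri}> <{prop}> <{ext}> ." for prop, ext in links]
    return []
```

Note that “Churchill College” would match too; as argued above, it is the bounded scope of the collection, not the matching code, that makes this acceptable.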

“Multi-level description” and “Inheritance”

One of the general issues these approaches bring me back to is the question of “multi-level description” in archival description, which I discussed briefly in a post on the LOCAH blog. Traditionally, archival description advocates a “hierarchical” approach to resource description: a conceptualisation of an archival collection as having a single “tree” structure, with a finding aid document providing information about an aggregation of records, then about component subsets of records within that aggregation, and so on, sometimes down to the level of individual records but often stopping at the level of some component aggregation.

This “document-centric” approach carries with it an expectation that the description of some “lower level” unit of archival material is presented and interpreted “in the context of” those other “higher level” descriptions of other material. And this is reflected in a principle of “non-repetition” in archival cataloguing:

At the highest appropriate level, give information that is common to the component parts. Do not repeat information at a lower level of description that has already been given at a higher level.

There is some suggestion here that lower-level resources implicitly “inherit” “common” characteristics from their “parent” resources – unless those characteristics are “overridden” in the description of the “lower-level” resource.

In practice, however, this “inheritance” is more applicable to some attributes than others: it may work for, say, the name of the holding repository, but it is less clear that it applies to cases such as the controlaccess “index terms”: it may be appropriate/useful to associate the name of a person with a collection as a whole, but it doesn’t necessarily follow that the person has an association with every single item within that collection.

The “linked data” approach is predicated on delivering information in the form of “bounded descriptions” made up of assertions “about” individual subject resources. So in transforming EAD data into an RDF dataset to support this, we’re faced with the question of how to deal with this “implicitly inherited” information: whether to construct assertions of relationships only for the resource for which they are explicitly present in the EAD document, or whether also to construct additional assertions for other “descendent” resources too, on the basis that this is making explicit information that is implicit in the EAD document.

In the LOCAH work, we’ve tended to take a fairly “conservative” approach to the “inheritance” question and worked on the basis that, in the RDF data, the concept, person, place, etc. named by a controlaccess term is associated only with the archival resource with which the term is associated in the EAD document.

For the SALDA/MOA data, I think an argument can be made – at least for some of the cases discussed above – for making such links for the “descendent” component resources too. For the “topic collections”, for example, it is a defining characteristic of the collection that each of the member resources has the named concept as topic. And a similar case might be made for the “place-based” series.

For the keyword-matching cases, an assumption that the association can be generalised to all the “descendent” resources would, I think, be more problematic.
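The distinction suggested above could be implemented roughly as follows: subjects derived from “topic collection” or “place series” titles are propagated to every descendant component, while keyword matches stay on the component where they were found. This Python sketch uses a hypothetical `Component` class in place of the EAD component hierarchy, and the policy it encodes is the one argued for here, not a settled part of the transform.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Component:
    uri: str
    # Subjects from "topic collection" or "place series" titles:
    # treated as applying to every descendant.
    inheritable: list[str] = field(default_factory=list)
    # Subjects found by keyword matching: kept on this component only.
    local: list[str] = field(default_factory=list)
    children: list[Component] = field(default_factory=list)


def subject_links(node: Component,
                  inherited: tuple[str, ...] = ()) -> Iterator[tuple[str, str]]:
    """Yield (resource, subject) pairs, pushing inheritable subjects down
    the tree and de-duplicating subjects for each resource."""
    own = inherited + tuple(node.inheritable)
    for subject in dict.fromkeys(own + tuple(node.local)):
        yield node.uri, subject
    for child in node.children:
        yield from subject_links(child, own)
```

With a series carrying a topic and two files beneath it, both files receive the topic, but only the file in which a keyword was matched receives that keyword’s entity.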

The “foaf:focus question”

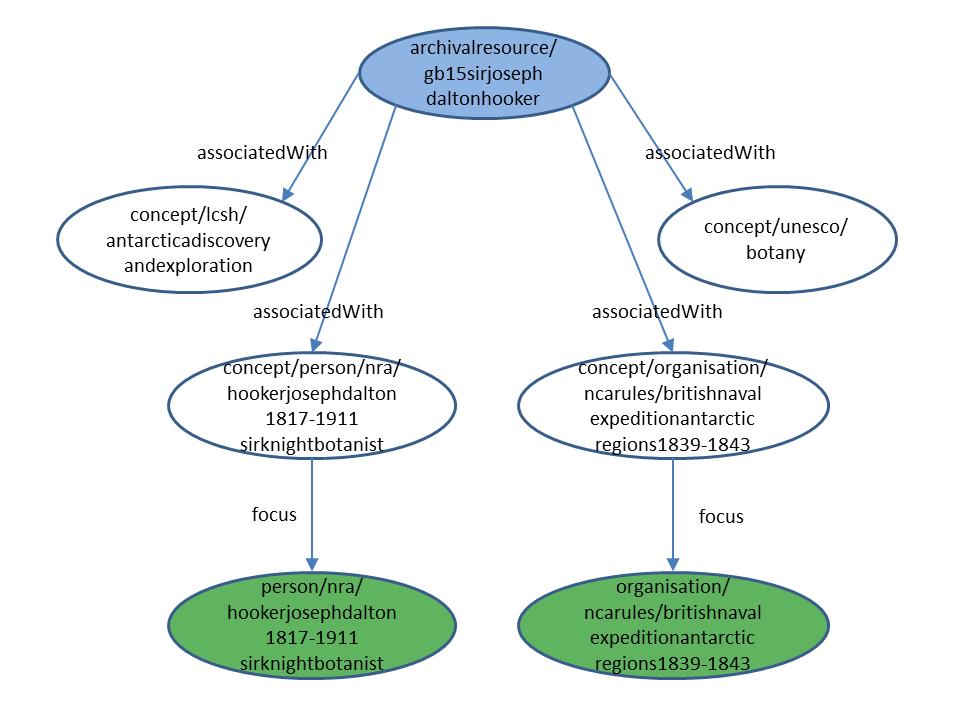

In the Archives Hub data that LOCAH is using, the controlaccess terms are (mostly at least) drawn from “authority files”. This is reflected in the LOCAH data model in a distinction between the “conceptualisation” of a person, organisation or place that is captured in a thesaurus entry or authority file record, as separate from the actual physical entity. So for the person/organisation/family/place cases, in the LOCAH transformation process, the presence of an EAD controlaccess term results in the generation of two URIs and two triples, the first expressing a relationship (locah:associatedWith) between archival resource and concept, and the second between concept and entity (person, organisation, place). This second relationship is expressed using a (recently introduced) property from the Friend of a Friend (FOAF) RDF vocabulary, foaf:focus.

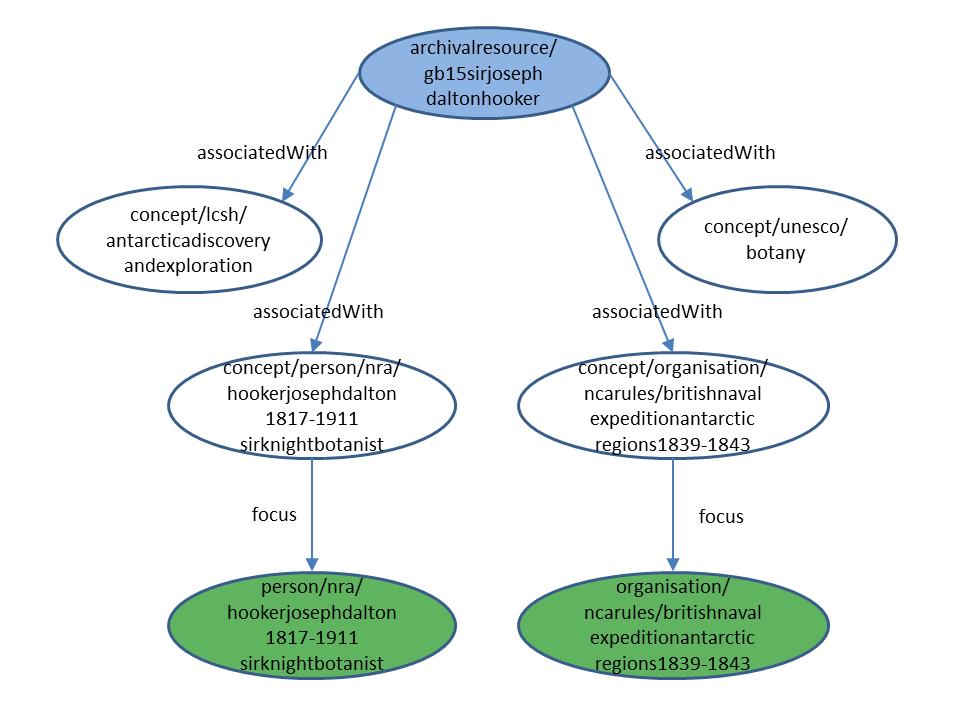

For a concrete example from the LOCAH dataset, consider the case of the Sir Joseph Dalton Hooker collection, which is identified by the URI http://data.archiveshub.ac.uk/id/archivalresource/gb15sirjosephdaltonhooker. The description of that “Archival Resource” shows that the collection is “associated with” four other resources, identified by the following URIs:

http://data.archiveshub.ac.uk/id/concept/lcsh/antarcticadiscoveryandexploration

http://data.archiveshub.ac.uk/id/concept/person/nra/hookerjosephdalton1817-1911sirknightbotanist

http://data.archiveshub.ac.uk/id/concept/organisation/ncarules/britishnavalexpeditionantarcticregions1839-1843

http://data.archiveshub.ac.uk/id/concept/unesco/botany

If we look in turn at the descriptions of those resources, we see that they are all concepts (i.e. instances of the class skos:Concept) – even the second and third cases. And in those two cases the concept is the subject of a “foaf:focus” relationship with a further resource, of type Person and Organisation, respectively:

http://data.archiveshub.ac.uk/id/person/nra/hookerjosephdalton1817-1911sirknightbotanist

http://data.archiveshub.ac.uk/id/organisation/ncarules/britishnavalexpeditionantarcticregions1839-1843

I’ve tried to depict this in the graph below. I’ve omitted the rdf:type arcs for conciseness, and relied on colour to indicate resource type (blue = Archival resource; white = Concept; green = Agent (Person or Organisation)).
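The two-triple pattern for the Hooker example can be sketched in Python as follows, using the URIs listed above. The foaf:focus URI is the standard FOAF one; the full URI used here for locah:associatedWith is an assumption that should be checked against the published LOCAH vocabulary, and the function `locah_triples` is illustrative rather than part of the LOCAH transform.

```python
ARCHIVE = "http://data.archiveshub.ac.uk/id/archivalresource/gb15sirjosephdaltonhooker"
HUB = "http://data.archiveshub.ac.uk/id/"

# Assumption: namespace for locah:associatedWith, to be checked.
LOCAH_ASSOCIATED_WITH = "http://data.archiveshub.ac.uk/def/associatedWith"
FOAF_FOCUS = "http://xmlns.com/foaf/0.1/focus"

# (concept URI, focus URI or None when there is no separate entity)
ASSOCIATIONS = [
    (HUB + "concept/lcsh/antarcticadiscoveryandexploration", None),
    (HUB + "concept/person/nra/hookerjosephdalton1817-1911sirknightbotanist",
     HUB + "person/nra/hookerjosephdalton1817-1911sirknightbotanist"),
    (HUB + "concept/organisation/ncarules/britishnavalexpeditionantarcticregions1839-1843",
     HUB + "organisation/ncarules/britishnavalexpeditionantarcticregions1839-1843"),
    (HUB + "concept/unesco/botany", None),
]


def locah_triples(archive, associations):
    """One associatedWith triple per concept, plus a foaf:focus triple
    from the concept to its person/organisation where there is one."""
    triples = []
    for concept, focus in associations:
        triples.append(f"<{archive}> <{LOCAH_ASSOCIATED_WITH}> <{concept}> .")
        if focus is not None:
            triples.append(f"<{concept}> <{FOAF_FOCUS}> <{focus}> .")
    return triples
```

This yields six triples: four associations and two foaf:focus links. For the SALDA place series discussed below, the intermediate concept would be dropped and the archival resource linked directly to the place.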

So, the question is how/whether this applies for the SALDA/MOA cases I describe above.

For the “topic collections” case, the link is simply to a concept (a member of a “MOA Topics” “Concept Scheme”), and there isn’t a separate physical entity involved.

For the “place series” case, in theory we could introduce a set of concepts but I’m not sure there is any value in doing so – there is no external thesaurus/authority file involved, and I think it’s reasonable to simply make the direct link between archival resource and place.

The keyword matching case actually covers various sub-cases, and I need to think harder about them, but broadly I think we should try to avoid the complexity of the “intermediate” concept where it isn’t really necessary.

Summary

In short, while I need to do some more work on it, it’s been relatively straightforward to apply the model and the transformation processes developed in LOCAH to the MOA data.

What is perhaps more interesting is how we’ve “specialised” the fairly “general” LOCAH approach, based on Karen’s “local knowledge” of specific characteristics of the MOA data.

While it’s perhaps premature to draw general conclusions from this single case, I do wonder whether the nature of the EAD format and the ways it is used may mean that this combination of the general and the local/specific turns out to be a common pattern, e.g. for a different dataset, a different set of “local”/specific characteristics might be identified and exploited in a similar fashion. Amongst other things, I should probably think about how this is reflected in the transformation process, e.g. whether it is possible to “modularise” the XSLT transform in such a way that the “general” parts are separated from the “specific” ones, and it is easier to “plug in” versions of the latter as required.
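One possible shape for that separation, sketched in Python rather than XSLT for brevity: a general mapping applied to every component, plus a list of pluggable dataset-specific “enhancers”. All names, the simplified tuple triples (objects may be URIs or plain strings) and the example URI base are hypothetical. In XSLT itself, a comparable effect is often achieved with a small local stylesheet that uses xsl:import to pull in the general one and then overrides or adds templates, since templates in the importing stylesheet have higher import precedence.

```python
from __future__ import annotations

import re
from typing import Callable, Iterable

Triple = tuple[str, str, str]
Enhancer = Callable[[dict], Iterable[Triple]]

DCTERMS_TITLE = "http://purl.org/dc/terms/title"
DCTERMS_SUBJECT = "http://purl.org/dc/terms/subject"


def general_transform(component: dict) -> list[Triple]:
    """Stand-in for the shared, dataset-independent mapping."""
    return [(component["uri"], DCTERMS_TITLE, component["title"])]


def moa_topic_enhancer(component: dict) -> list[Triple]:
    """Hypothetical MOA-specific module: derive a topic from a 'TC' title."""
    m = re.match(r"TC\d+\s+(.+?)\s+\d{4}", component["title"])
    if m is None:
        return []
    slug = re.sub(r"[^a-z0-9]", "", m.group(1).lower())
    return [(component["uri"], DCTERMS_SUBJECT,
             "http://data.example.org/id/concept/moatopic/" + slug)]


def transform(components: Iterable[dict],
              enhancers: Iterable[Enhancer] = ()) -> list[Triple]:
    """Apply the general mapping, then every plugged-in enhancer."""
    enhancers = list(enhancers)
    out: list[Triple] = []
    for component in components:
        out.extend(general_transform(component))
        for enhance in enhancers:
            out.extend(enhance(component))
    return out
```

Running `transform(docs)` gives only the general output; `transform(docs, [moa_topic_enhancer])` adds the MOA-specific subject links, and a different dataset would simply supply its own list of enhancers.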