Welcome to the April and May 2026 Spotlight on AI in Education bulletin. With things moving so fast, this bulletin will help you cut through the noise and catch what’s important. It highlights on-the-ground practice, institutional perspectives, and trends in generative AI use across the sector and beyond. We hope you find it useful.

If you have anything you’d like to contribute or see in this bulletin, please email EE@sussex.ac.uk

On the ground at Sussex

Sussex Education Festival 2026

The fourth Sussex Education Festival happened last week at the Student Centre.

The festival is for anyone involved in delivering education at Sussex and provides a supportive and collaborative space to celebrate and share experiences, research and reflections on teaching, learning and assessment. This year’s event was well attended, with lots of interesting and thought-provoking talks on a range of topics.

There were three AI sessions as part of the programme:

1. Using an AI-generated reception class to enhance deliberate practice in initial teacher education. Hayley Preston-Smith explored how trainee teachers could use an AI-generated reception class to safely practise classroom activities, behaviour management, report writing and other early-years skills, while also benefiting from peer reflection and more equitable learning experiences.

2. Teaching gen-AI prompting to develop science communication skills. Greig Joilin (Life Sciences) examined how teaching students to use and critique generative AI prompts can support science communication skills, while highlighting the need for stronger critical thinking, self-evaluation, and a staged AI literacy curriculum across degree programmes.

3. Designing with AI: A backward-design and TBL approach focused on inputs, not outputs. Gabriella Cagliesi (USBS) argued that, through backward design and clear pedagogical rules, AI can move beyond being a passive chatbot to become an active teaching partner that supports questioning, reflection, communication and critical engagement with AI-generated answers.

Next AI Community of Practice 8th June

The Teaching with AI Community of Practice will meet again on Monday 8th June, when we look forward to hosting a salon-style event with opportunities to reflect on 2025/26 and discuss your ideas, experiences and questions with each other.

We will meet in person on the University campus, in the Library Teaching Room (Ground Floor, by the step-free access next to IDS). Please register to attend by completing this form. Completing the form is particularly useful because it helps us accommodate any access requirements.

Read more about our previous AI CoPs on the blog.

AI in education hub

Please take a look at our new and improved AI in education hub. Here you will find everything you need to know about the use of artificial intelligence (AI) in teaching, learning and assessment at the University of Sussex.

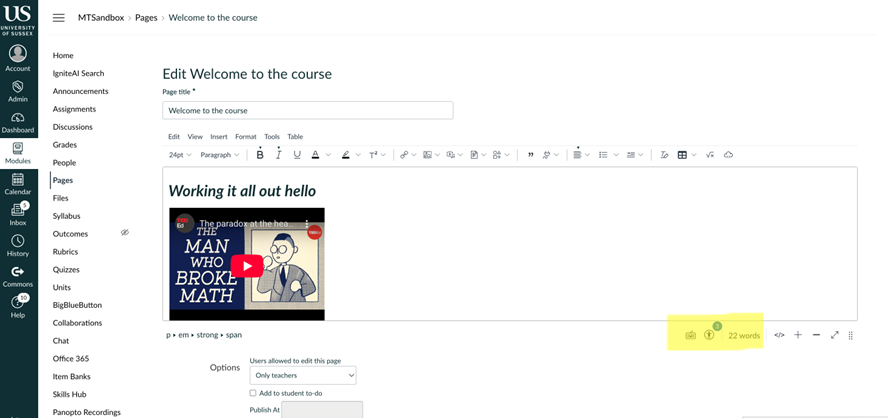

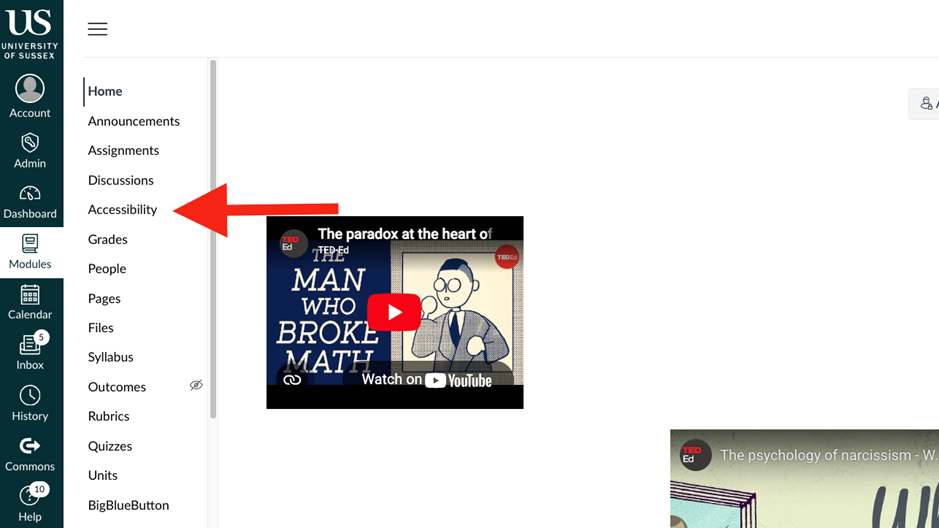

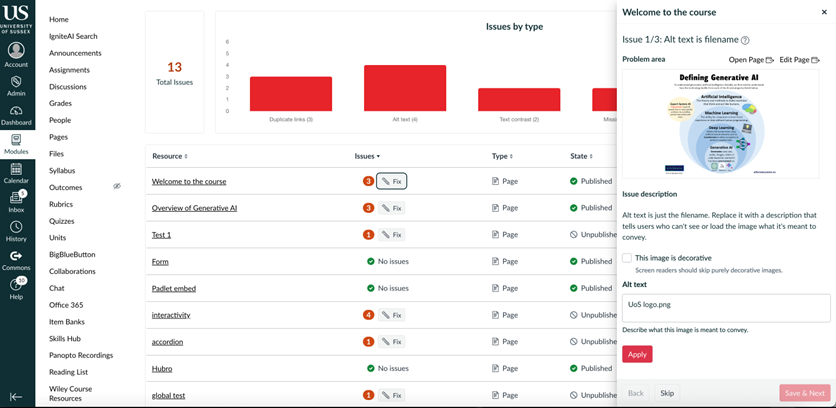

Self-enrol on Educational Enhancement’s Canvas module AI at Sussex (Sussex staff and students only) to support your use of AI in your teaching and learning. Please remember that Copilot and other tools are constantly being developed, and the material might not always reflect how the tool operates on a given day.

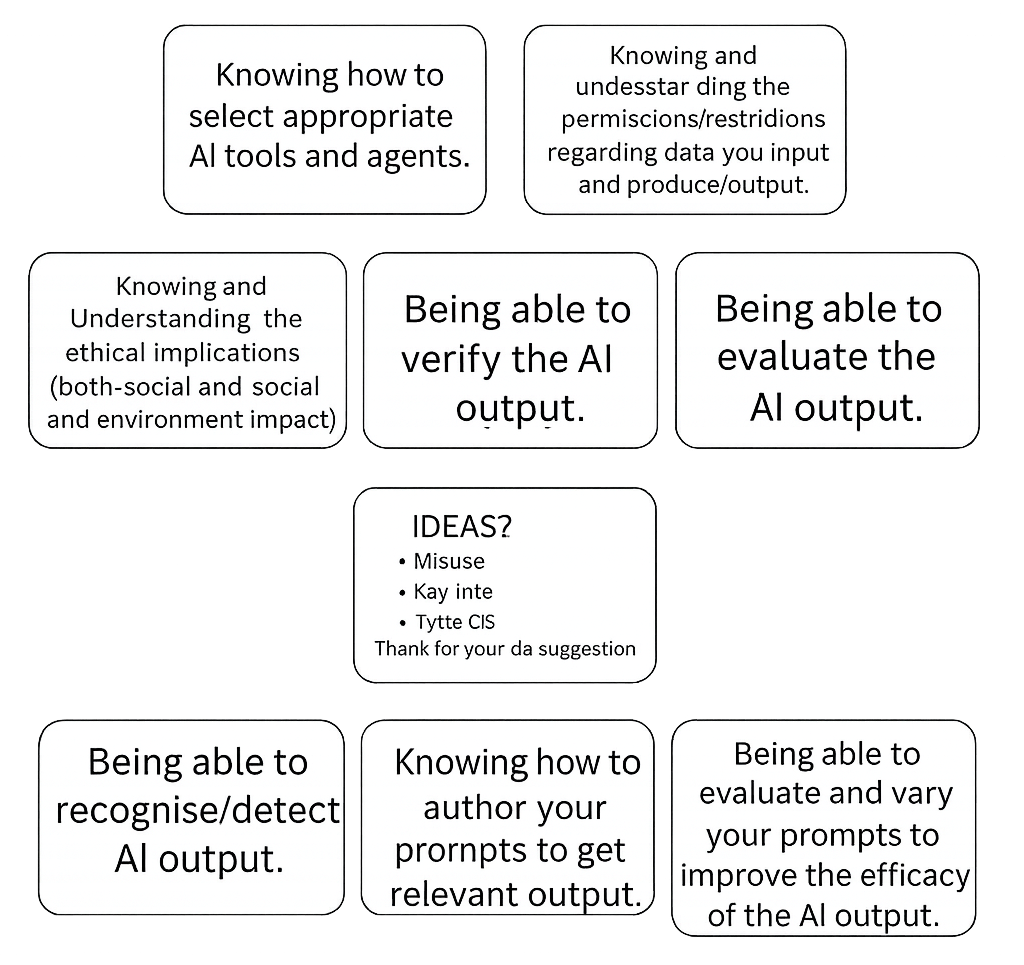

The JISC Building Digital Capabilities Toolkit is available to all Sussex staff and students, and includes guidance on how to improve your own digital skills. Access the toolkit here. The specialised question set looking at AI focuses on:

- AI and digital proficiency

- AI and digital productivity

- AI and information and data literacy

- AI and digital communication

- AI and collaboration and participation

- AI and digital creativity

- Responsible AI

You can read more about the University of Sussex Principles of use of AI.

Find out more and book your place on the Summit.

Across the sector

Topic: “Is AI the new plastic?”

In my reading this month, the question of values in our AI-driven future has come up at both the micro and macro level, with both ultimately looking at the enduring quality of AI use. Here are two articles on this subject:

Values-led Generative AI in Design Education: A Toolkit for Confident, Critical Practice

This post looks at how Nottingham Trent University, University of the Arts London, and Norwich University are exploring how generative AI is reshaping art and design education. They are responding by developing practical support to help students and staff integrate AI tools confidently while addressing authorship, ethics, originality, and creative practice. [altc.alt.ac.uk]

The resulting open Art, Design & Artificial Intelligence: Educator’s Toolkit offers design-cycle activities, case studies, and reflective “spark card” prompts grounded in nine shared AI design values (e.g., human creativity first, transparency, inclusion, process over output) to encourage critical, values-led use of generative AI in teaching.

This statement resonated with me and is something I wish to explore more: “Whilst technologies evolve rapidly, the values cultivated through creative, critical and material practice are more enduring”. The kinds of activities described in the article put process and skills front and centre while taking the emphasis off the final product. They are important reminders, for me personally, as I develop my professional work with AI as a supporting tool.

The tensions of AI shouldn’t come as a surprise in a system wired for speed and output

In this WonkHE article, the author argues that the tension in the HE sector over AI use isn’t just a governance or policy problem; it’s a “dopamine problem,” because AI offers fast cognitive relief (turning friction, uncertainty, and drafting effort into neat output). In response, universities need to rethink what they value and design for slowness, difficulty, revision, and critical engagement, especially as AI tools become embedded as invisible infrastructure. [wonkhe.com]

“We have seen this pattern before. Fast fashion was convenient before it was environmentally destructive. Social media was connective before it was corrosive. Plastic was revolutionary before it was everywhere… forever. In each case, the benefits were immediate and personal; the costs were distributed, delayed and easier to ignore. We normalised first and interrogated later.”

Novelty, assistance and potential embarrassment: patterns of change in AI use amongst teachers. April 2026

Martin Compton’s article looks at a 12-month-old post asking ‘What GenAI tools can do well’, which has had hundreds of responses from educators around the world. Compton later asked contributors to the post: “……What are your top personal and/or professional uses of generative AI tools?”

Compton reports: “The teachers, lecturers and students shared examples of what they were doing, along with anxieties and uncertainties. These comments revealed messy, sometimes confused pictures that reflect anything but consensus. Rather than any sort of transformation either to digital utopia or intellectual ruin there is nevertheless a sense of change over time that suggests increased normalisation and experimentation alongside heightened wariness.” [HEducationist]

Further Afield

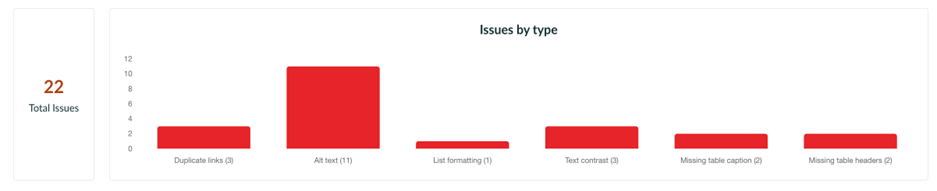

Global Accessibility Awareness Day (GAAD) 21st May 2026

Global Accessibility Awareness Day is a worldwide initiative that encourages everyone to talk, think, and learn about digital access and inclusion for the more than one billion people living with disabilities. By spotlighting the importance of accessible design across web, mobile, and emerging technologies such as AI, GAAD highlights how inclusive digital experiences can remove barriers and create more equitable opportunities for all.

For more information about events, news and resources, visit the website.

The University of Kent’s Digitally Enhanced Education webinars!

These webinars are organised into playlists based on different elements of AI use. The employability playlist has nine episodes with Q&A elements. All are available for free on YouTube, alongside other playlists focusing on AI and digital technologies for teaching.

In case you missed it

Other links on the topic of AI in teaching and learning you may have missed.

- Join the Teaching and Learning with GenAI Community: If you’d like to join the community and be first to hear about events, get in touch with us and we can add you to the list and the dedicated MS Teams community. www.tinyurl.com/sussex-ai-cop

- Disclaimer on any tools not supported at Sussex: Please do not share Sussex, student, colleague, sensitive or personal data via these platforms. Not being supported means they have not passed stringent Data Protection assessments and could put you in breach of policy and legislation. For a list of supported platforms for teaching and learning, please visit the Educational Enhancement website.

This was a Spotlight on AI in Education update from Educational Enhancement